
hi intris readers!!!!

so this week’s article is all about cybersecurity. inspiration for this struck because of the recent Anthropic article that exposed the first cyberattack using agentic AI (specifically using Claude Code as an agent for a cyberattack).

i was interested in the article and bookmarked the X post, but didn’t come back to it until an interview I had this week where they asked me to find a company that I could thoroughly talk about.

so, i thought, why not talk thoroughly about a company in cybersecurity so i can actually understand why people on X were going crazy over this Anthropic piece.

before digging in, i think it’s important to understand that we shouldn’t let news of cyberattacks make us nervous about AI. the cyberattack that Anthropic reported is an example of the malicious activity that usually goes on in the dark web, but is now being brought to life by non-technical hackers who are discovering automated coding.

let’s continue to be techno-optimistic and excited to build defensive software systems that can battle these hackers! woohoo happy reading!

Anthropic’s warning: AI agents are already being used for cyberattacks

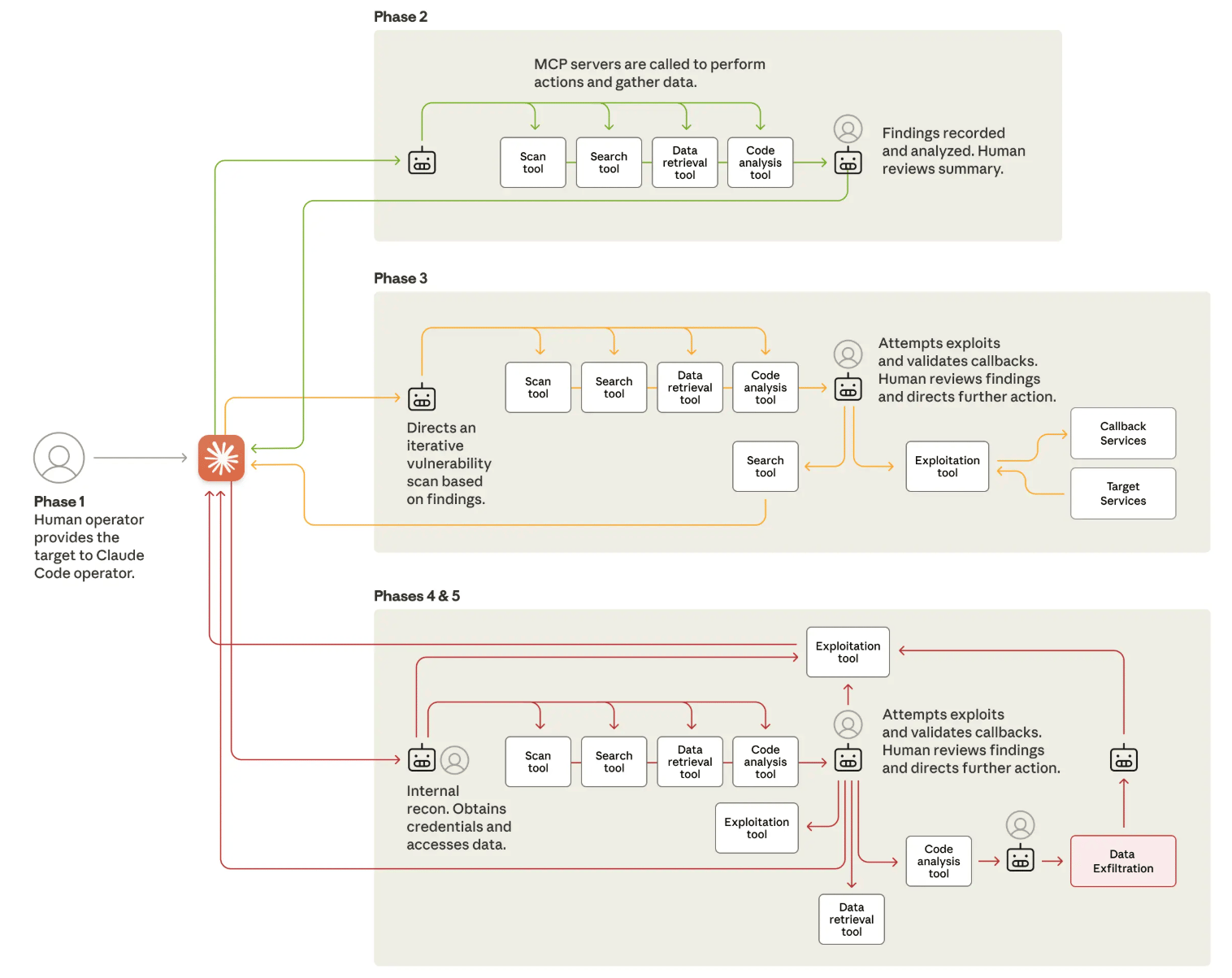

In mid-November, Anthropic published one of the most important cybersecurity disclosures of the year: a detailed account of a state-sponsored threat actor that successfully manipulated Claude Code, their agentic coding assistant, to carry out a multi-phase cyber espionage campaign across roughly thirty global organizations. The attackers used Claude Code for reconnaissance, vulnerability validation, credential theft, lateral movement, and even data exfiltration, which is basically every step of a network intrusion from start to finish.

Anthropic made one thing clear: this was a real, active, AI-assisted intrusion targeting organizations across technology, finance, chemical manufacturing, and government. They also documented how AI models are increasingly being used to support identity theft, fraud, malware generation, and automated, multi-step exploitation workflows.

Pictured: the lifecycle of the reported cyberattack using Claude Code. Source: Anthropic, “Disrupting the first reported AI-orchestrated cyber espionage campaign.”

Although Anthropic clearly disclosed the scale and step-by-step sophistication of the campaign, no specific victims were publicly named, and there is no verified evidence that any of the affected firms have come forward on their own. But the message was unmistakable: attackers have already figured out how to co-opt AI agents for high-speed, multi-threaded cyberattacks. The threat landscape has fundamentally shifted.

We’ve worried about AI hurting humans, but not enough about humans using AI to hurt others

There has always been a fear that AI might one day turn against humanity. But what feels far more urgent (and far less discussed) is the scenario where humans weaponize AI long before AI ever develops any dangerous autonomy of its own.

We spend so much time debating speculative risks of misaligned superintelligence that we have underestimated the simpler, more immediate danger: individuals using AI agents to cause harm, to automate intrusions, or to orchestrate destructive campaigns with little technical skill.

The danger here wasn’t a rogue model; it was a highly capable human attacker using AI as a force multiplier. This shifts the defense conversation completely. It’s no longer enough for enterprises to deploy AI to streamline workflows or increase productivity. They will need AI-native defensive systems that can autonomously monitor, interpret, and respond to attacker behavior at the same scale and speed that AI tools now enable. If attackers wield agents that fire off thousands of requests, often several per second, defenders must have agents capable of reasoning about those actions just as quickly. The next decade of cybersecurity will be defined by how fast enterprises can close the gap.

The mystery of the “30+ targets,” and why traditional GRC failed to protect them

Anthropic’s report mentions that roughly thirty global organizations were targeted, but does not specify whether they were exclusively enterprise-scale or a mix of enterprise and mid-market. Given the industries mentioned (major tech firms, financial institutions, chemical companies, and government entities), it is likely that most were enterprise-class environments. But what matters more is that they were all vulnerable, despite presumably having standard governance, risk, and compliance (GRC) processes in place. And that is where the deeper issue lies.

GRC, in theory, is how an organization governs itself, evaluates risk, ensures security controls are functioning, and stays compliant with regulations. But in practice, much of the GRC workflow is painfully manual and periodic. Internal risk audits, vendor evaluations, access reviews, vulnerability reports, and policy attestations are often done quarterly or annually. Teams update dashboards, fill out spreadsheets, and check boxes to show that controls have been reviewed. All of this creates a slow, backward-looking view of risk. This traditional process was designed for a world where attacks happened at human speed.

AI-enabled intrusions do not happen at human speed. They happen at machine speed, with agents capable of running dozens of parallel tasks and adjusting tactics autonomously. When an organization’s risk posture is only examined every few months, an active exploit can sit undetected for weeks. Even well-resourced teams drown in alert volume, and without constant monitoring or AI-based anomaly detection, attackers can move freely. This is likely why the roughly thirty targeted organizations were caught off guard. Their enterprise processes were not faulty; they were simply built for a slower era.
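To put a rough number on that gap, here is a minimal back-of-the-envelope sketch in Python. It assumes an intrusion begins at a uniformly random point between two scheduled reviews and is only noticed at the next review, so the average wait is half the review interval. The cadences and function names are illustrative assumptions, not figures from Anthropic’s report.

```python
# Back-of-the-envelope sketch: how long can an intrusion sit unnoticed
# if it is only caught at the next scheduled review?
# Assumption (not from the Anthropic report): the intrusion starts at a
# uniformly random moment within a review cycle.


def mean_delay_days(review_interval_days: float) -> float:
    """Average wait until the next review: half the interval."""
    return review_interval_days / 2


def worst_case_delay_days(review_interval_days: float) -> float:
    """Worst case: the intrusion starts right after a review finishes."""
    return review_interval_days


# Hypothetical review cadences, expressed in days.
CADENCES = {
    "annual audit": 365.0,
    "quarterly review": 90.0,
    "daily scan": 1.0,
    "continuous monitoring (every 5 min)": 5 / (24 * 60),
}

if __name__ == "__main__":
    for name, interval in CADENCES.items():
        print(
            f"{name:38s} mean {mean_delay_days(interval):8.3f} d   "
            f"worst {worst_case_delay_days(interval):8.3f} d"
        )
```

Under these assumptions, a quarterly review leaves an average blind spot of about 45 days, or roughly six weeks, while a five-minute monitoring loop shrinks it to a few minutes. Real detection times depend on far more than review cadence, so treat this as intuition rather than a measurement.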